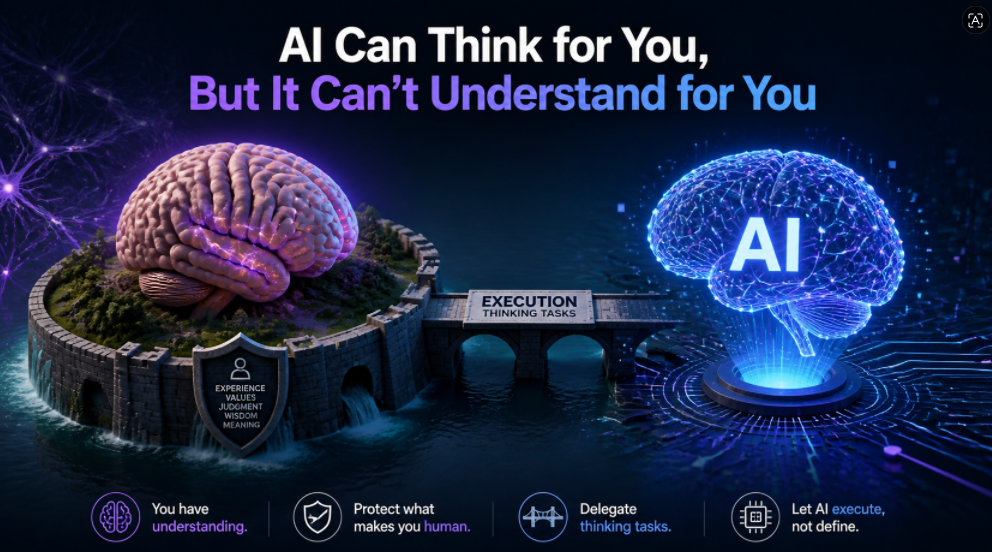

“The only real moat humans have is understanding.”

Andrej Karpathy, one of the most influential voices in AI, recently returned again and again to one sentence during an interview at a Sequoia Capital AI summit:

“You can outsource your thinking, but you can’t outsource your understanding.”

Put plainly: you can delegate the work of thinking to AI, but the understanding has to remain your own.

At first glance, this sounds a bit like a motivational quote. But in reality, it is highly technical and very practical. It is not saying that AI cannot think. It is also not trying to comfort people by saying that humans still have some mysterious power that machines will never replace.

What it really points out is this: once AI can help you write code, research information, make plans, generate solutions, and even run experiments, human value no longer comes mainly from “doing the work by hand.” It comes from knowing what you are actually doing.

Execution Is No Longer Enough

In the past, a person’s competitiveness often came from execution. If you could write code, build slides, tune models, set up systems, or read papers efficiently, those were valuable skills.

But now, many execution tasks are being rapidly commoditized by AI. Someone who does not know frontend development can ask AI to generate a page. Someone unfamiliar with a library can ask AI to look up the API. Someone too busy to write documentation can let AI draft it first. Things that once took hours, days, or even weeks can now produce a decent-looking version in just minutes.

The problem is that looking good does not mean being correct.

That is exactly why Karpathy’s point about not outsourcing understanding matters. AI can give you an answer, but you still need to know why that answer works. In other words, it is not enough to know what is true. You also need to know why it is true.

AI can generate a piece of code, but you must know which boundary conditions could break it. AI can give you ten different options, but you must know which one truly solves the problem and which ones only sound polished.

What Understanding Really Means

Here, “understanding” does not mean memorizing facts or repeating concepts. Real understanding is a kind of control.

You know what the structure of the problem is. You know which assumptions cannot be wrong. You know where the system might fail. You know how to verify the result. You know when to keep optimizing and when to start over.

Take software engineering as an example.

Suppose you ask AI to build a subscription system: after a user pays, they should automatically get Pro access. AI will probably generate something like this: it takes the email from Stripe’s payment event, looks up the user by email, and then grants Pro access to that account.

At first glance, this sounds reasonable. The payment event has an email, the user table has an email, and if they match, the membership gets activated. It looks fine.

But someone who truly understands the system will immediately notice the risks. Is the payment email always the same as the login email? Can a user change their email later? Could one email be linked to multiple accounts? Can the webhook be sent more than once? How should refunds and chargebacks be handled? After a subscription is canceled, how should access be downgraded? And what should the real identity key be: email or user ID?
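Two of these questions, the mismatched email and the shared email, can be made concrete with a small Python sketch. Everything in it is hypothetical: the `User` record, the `grant_pro_by_email` function, and the event shape only illustrate the email-matching approach described above. They are not Stripe's actual event schema or any real handler.

```python
from dataclasses import dataclass


@dataclass
class User:
    user_id: int
    login_email: str
    is_pro: bool = False


def grant_pro_by_email(users: list[User], event: dict) -> list[User]:
    """Naive handler: treats the payment email as the identity key."""
    matched = [u for u in users if u.login_email == event["email"]]
    for user in matched:
        user.is_pro = True
    return matched


users = [
    User(1, "alice@example.com"),
    User(2, "shared@example.com"),
    User(3, "shared@example.com"),  # two accounts registered with one email
]

# Alice pays with a different billing email, so no account is upgraded.
missed = grant_pro_by_email(users, {"email": "alice.billing@example.com"})

# One payment on a shared email upgrades two accounts.
over_granted = grant_pro_by_email(users, {"email": "shared@example.com"})
```

Neither failure raises an error, which is part of why the email-keyed version looks fine in a quick demo.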

These are not coding questions. They are system-understanding questions.

AI can generate code, but humans must understand identity, permissions, idempotency, state consistency, and security boundaries. Otherwise, the faster the code is generated, the faster the mistakes are amplified.
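As a rough illustration of what idempotency and a stable identity key mean in practice, here is a minimal Python sketch. It assumes the internal user ID is attached to the payment when checkout starts and comes back on every event. The field names (`event_id`, `kind`, `user_id`), the event kinds, and the in-memory `SubscriptionStore` are hypothetical stand-ins, not Stripe's schema or a production design.

```python
from dataclasses import dataclass, field


@dataclass
class SubscriptionStore:
    """In-memory stand-in for a database (hypothetical, for illustration)."""
    pro_users: set[int] = field(default_factory=set)
    seen_event_ids: set[str] = field(default_factory=set)


def handle_payment_event(store: SubscriptionStore, event: dict) -> str:
    """Apply one payment event at most once, keyed by internal user ID."""
    event_id = event["event_id"]
    if event_id in store.seen_event_ids:
        return "duplicate"  # idempotency: a redelivered webhook is a no-op

    user_id = event.get("user_id")
    if user_id is None:
        return "rejected"  # never fall back to matching by email

    kind = event["kind"]
    if kind == "payment_succeeded":
        store.pro_users.add(user_id)
    elif kind in {"refunded", "chargeback", "subscription_canceled"}:
        store.pro_users.discard(user_id)  # downgrade access
    else:
        return "ignored"

    store.seen_event_ids.add(event_id)
    return "applied"


store = SubscriptionStore()
paid = {"event_id": "evt_1", "kind": "payment_succeeded", "user_id": 42}
refund = {"event_id": "evt_2", "kind": "refunded", "user_id": 42}

results = [
    handle_payment_event(store, paid),
    handle_payment_event(store, paid),  # same webhook delivered twice
    handle_payment_event(store, refund),
]
```

A real system would keep both sets in a database, record the event ID and the access change in a single transaction, and decide how to treat events that arrive out of order. The sketch ignores all of that.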

The Hidden Risk of AI

This is the most dangerous part of the AI era.

In the past, if you did not understand something, you often simply could not build it. Now, even if you do not understand it, you can still produce something that looks like it works.

So ignorance no longer shows up as “no output.” It shows up as “a lot of output, but no idea where the errors are.”

That is why Karpathy’s sentence is actually redefining cognitive labor. AI is excellent at handling local thinking: listing options, drafting initial versions, generating code, summarizing material, and searching for candidate solutions. It makes many low-level execution tasks and mid-level reasoning tasks cheaper.

But humans still need to handle the higher-level work: defining the problem, setting the goal, judging the plan, identifying risks, building evaluation criteria, and ultimately taking responsibility.

AI Amplifies What You Already Know

A lot of people misunderstand AI because they treat it as a machine that can “understand for me.” But a more accurate way to use AI is to treat it as a tool that amplifies and executes your existing understanding.

If your understanding of a field is shallow, AI will amplify your shallowness. It will follow your flawed question and give you a pile of polished but possibly wrong answers.

If your understanding of a system is deep, AI will amplify that depth. You can ask it to break down tasks, fill in details, look for counterexamples, run checks, write tests, and generate multiple candidate solutions. Then you use your own judgment to filter and correct them.

AI does not automatically make an ignorant person wise. In fact, it often makes people feel they understand something well before they actually do.

Why This Matters for Builders

This is especially important for people who build software, because the boundaries between different kinds of cognitive work are already blurry there. In hardware, things are often more visible and concrete. In software, the abstractions are harder to see, and errors may stay hidden for a long time.

In the past, a programmer’s moat might have been knowing a language, a framework, or a set of APIs. But now, those things are losing value fast, because AI is very good at checking documentation, filling in syntax, and writing boilerplate code.

What matters more now is whether you understand the shape of the system, the data flow, the abstraction boundaries, which states must stay consistent, which permissions must never cross, and whether a piece of code will still be maintainable three months later.

In other words, memorizing APIs is no longer the moat. The system model is.

The Same Logic Applies to Founders

AI makes building demos incredibly easy. Soon, almost anyone will be able to create something that looks like a product. The hard part is no longer “Can you build it?” but “Should you build it?”, “Where exactly is the user’s bottleneck?”, “Is this workflow really worth redesigning?”, and “Is the core product judgment actually correct?”

When execution costs go down, judgment costs go up.

That is because your leverage is much greater. One bad judgment used to waste maybe a day. Now one bad judgment can set a dozen AI agents working in parallel on an entirely wrong system. The bigger the leverage, the more important understanding becomes.

How to Use AI Properly

That is why the phrase “the only real moat humans have is understanding” should not be read as anti-technology or anti-AI. Quite the opposite. It asks humans to use AI more deeply.

Do not just ask AI, “What is the answer?”

Ask instead:

- What are the core variables here?
- Which assumptions are most fragile?
- Are there counterexamples?
- What is the smallest possible validation experiment?
- If this conclusion is wrong, where is it most likely to fail?
- What metrics should I use to judge whether it is good?

These questions are not meant to get a prettier answer. They are meant to help you build your own mental model.

Learning should also move in this direction. Do not just let AI write homework, write code, or make conclusions for you. Instead, use it to break down concepts, build examples, expose blind spots, generate counterexamples, and simulate conflict between different viewpoints.

What Real Understanding Looks Like

At its core, understanding is the ability to keep making sound judgments in long-tail situations where there is no standard answer, and sometimes no conventional answer at all.

If you can compress a complex problem into a clearer structure, that means you understand it. If you can predict what happens when the conditions change, that means you understand its causal logic. If you can identify why a system breaks, that means you understand how it works. If you can teach it from scratch, that means you have not just memorized the wording; you have actually mastered the model.

That is the most important skill in the AI era.

Final Thought

So the most important thing in Karpathy’s statement is this: once AI gives you almost unlimited execution power, do you have enough understanding to lead it?

The future failure mode for many people will not be that they cannot use AI. It will be that they use AI too well, without knowing what they are actually amplifying.

You can outsource many thinking steps to AI, but AI cannot replace the judgment that comes from understanding. It can help you move faster, but it cannot decide whether you are moving in the right direction.

In the end, humanity’s real moat is not remembering a few more facts than AI, or writing code faster than AI. It is the ability to build an accurate world model, system model, and evaluation model.

Then, AI becomes your exoskeleton for execution and search, not your brain.
